Agile Velocity Anti-Patterns

Agile Velocity is the most widely used-and-abused metric associated with Agile software development. Let’s take a quick look at some of the most popular abuses.

But first…

A Conversation

Nice to see you again. How are things going in your software development organization?

We’re doing Agile!

That’s great. Congratulations. What sort of process are you using?

What do you mean? We’re doing Agile!

I mean, are you using Scrum, or Extreme Programming, or something? Or did you craft your own process?

Well…um…what’s the one with Sprints?

That’s Scrum.

Oh, okay. That’s what we’re using, then.

I see. How are you planning and tracking your work? What sort of metrics are you using?

We’re using Agile metrics, of course. We’re doing Agile!

Sure. But which ones?

Well, the only one, I guess. You know. Velocity.

Right. Is your velocity stable enough that you can use it for forecasting?

Um…well…not sure what you’re asking.

Okay. Well, never mind. How long are your Sprints?

Two weeks…

<nodding/> That’s pretty typical.

…usually.

Um…what?

Two weeks usually, unless we have a problem getting all our commitments done.

Hmm. That’s interesting. How are you forecasting the amount of work you can complete per Sprint, if the Sprint length is variable?

<shrug/> Not sure what you mean by “forecasting.” We just estimate the time for each User Story and convert that into Story Points.

You start with a time estimate and convert it to Story Points?

Yeah. I told you we’re doing Agile, didn’t I?

You did, yeah.

It’s pretty simple. Four hours equal one Story Point. That’s just basic Agile, you know.

Of course. I must’ve forgotten that.

Estimates aren’t perfect. If it takes more than two weeks, then that’s just how it is. So we extend the Sprint until we get it done. Gotta be realistic, right?

Yep. Gotta be realistic. Well, at least you’re delivering a production-ready solution increment in each Sprint.

Right! That’s the important thing.

Yep. So, you’re moving code into production every couple of weeks, unless it takes longer.

Right. Well…

Well…what?

We finish our part of the solution every couple of weeks, usually.

What’s your part?

The code.

So you deliver code, and nothing else, every two weeks…to production?

No, not directly to production. That would be crazy! No one does that. It would be impossible!

Where does the code go, then?

To the infrastructure team.

And they decide when to put it into production?

No. Aren’t you listening? We’re doing Agile! They move it into a test environment for the functional testing team.

Are you telling me that three different teams touch the code before it’s even tested for the first time?

Well, yeah. Of course. You’re making it sound like a bad thing.

True. Something tells me I should save the word “bad” for later.

That’s a good idea, yeah.

So, how are you using velocity to forecast…that is, to understand how much work you can agree to complete in the course of a Sprint?

Agree? Ha! The Product Owner tells us what has to be delivered. That’s their job. Haven’t you heard about Agile before? We estimate all the User Stories so we can fit the Story Points into the next Sprint.

Fit them.

Yeah.

And what happens if they don’t fit?

We discuss it with the Product Owner.

Well, that sounds promising!

Yeah. We collaborate to figure out how to make our estimates smaller, so the Story Points will fit into the Sprint.

But sometimes you find out they won’t fit after all. And then you extend the Sprint.

Now you’re catching on! That’s Agile!

So…forgive me for asking…if you have variable-length Sprints, you only complete a portion of the work before handing it off to another team, you adjust your User Story sizes so that a dictated amount of work will “fit” into a Sprint, and a Story Point is just another name for a time-based estimate, then what do you actually do with your velocity numbers?

We plot them on burn charts, of course! Burndown within the Sprint, and burnup for the release. You know, basic Agile! Our burn charts look really good, too.

No doubt. But what kinds of decisions do people make based on that information?

Um…well…not sure what you’re asking.

Velocity Defined (Sort Of)

Turning to that wondrous and beautiful information store, Wikipedia, I found a definition for velocity that reads more like a rant by someone who has had some negative experiences with velocity, and took those experiences to be the “definition” of velocity. Wikipedia entries can be changed, so we can hope for improvement. For now, let’s move past that one and keep looking for definitions.

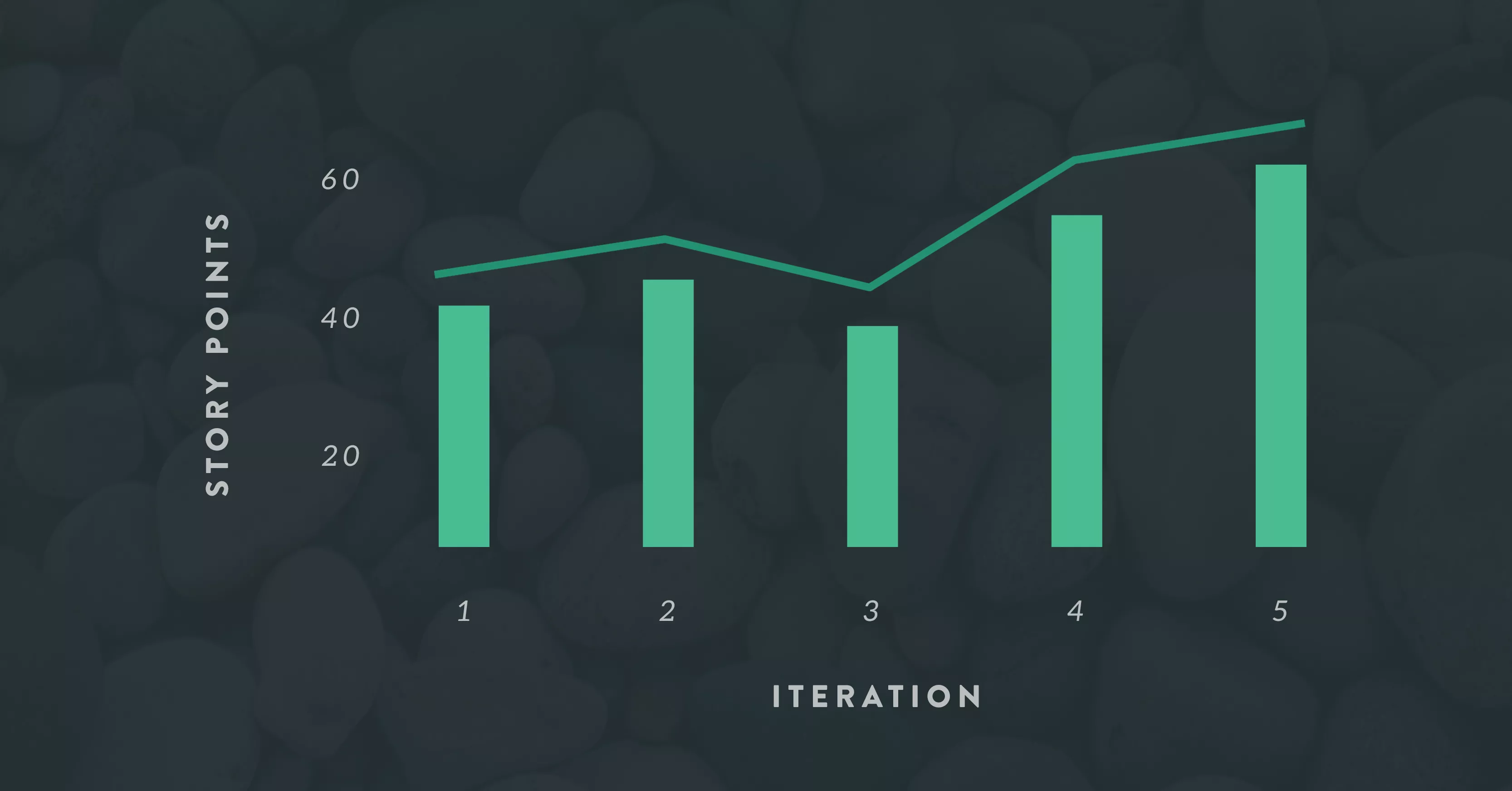

The Scrum Alliance has an article online by Catia Oliveira entitled, “Velocity”. Her definition reads as follows: “Velocity is the number of story points completed by a team in an iteration.” She adds a hand-drawn velocity chart which she captions, “Awesome handmade velocity chart.”

This is not false modesty on her part. It really is that simple.

Really.

I’m not sure why people would find that definition confusing. Actually, it could be Story Points, it could be the number of User Stories, it could be the number of estimated hours completed, it could be the number of production tickets closed…it could be any reasonable measure of the quantity of work completed. In any case, it’s just a simple count. One, two, three. And yet, velocity seems to confuse a lot of people.

Maybe the confusion stems in part from the word, “completed.” In this context, completed doesn’t mean that the code is ready for the infrastructure team to move to a test environment so the functional testing team can work with it. It means completed to the point that it could be released to production, if the key stakeholders make a business decision to release it. There are no technical steps remaining to prepare the code for release.

A lot of development teams operate in organizations that are only beginning to apply Agile methods, or that believe Agile methods have to be adapted severely to work “at scale.” Their organizational structure, and their assumptions about things like efficiency through role specialization or separation of duties according to Sarbanes-Oxley, preclude them from delivering anything in a production-ready state.

But they think they are required to track velocity, according to the Rules of Agile.

So they make stuff up.

The truth is, if a team doesn’t deliver a production-ready solution increment at least once per iteration, they have no velocity. Let me be clear: I don’t mean that their velocity numbers are incorrect. I mean they don’t have any velocity numbers. They may be reporting something under the heading, “Velocity,” but it isn’t velocity. Can’t be. They haven’t really completed anything.

Agile Velocity Defined (Hopefully Better)

Oliveira’s definition is a practical working definition of velocity that enables teams to forecast the amount of work they are likely to be able to complete in the near future, based on empirical observation of their delivery performance in the recent past. The only expansion I would make to her definition is to note that Story Points don’t have to be the unit of measure.

So, velocity as such is not complicated. It does, however, have a key dependency. I’ve observed that velocity is only meaningful when the team uses a time-boxed iterative process model. Otherwise, velocity has literally no meaning at all.

A time-boxed iterative process model has two defining characteristics:

- the iterations are all the same length

- within each iteration, some piece of functionality has to be completed to the point that it could be released to production (or included in a product) with no additional technical steps to prepare it

If either of those things is not true, then the team has no velocity.
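To make those two conditions concrete, here is a minimal Python sketch. The `Iteration` record and the `velocity_history` function are hypothetical names of my own, not part of any tool, and the unit is assumed to be Story Points even though any simple count would do. The idea is only that a team either satisfies both conditions or has nothing to report.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Iteration:
    # Hypothetical record of one iteration; field names are illustrative.
    length_days: int            # calendar length of the iteration
    releasable_points: int      # work that needs no further technical steps
    handed_off_points: int = 0  # work passed to another team before it was done


def velocity_history(iterations: List[Iteration]) -> Optional[List[int]]:
    """Return per-iteration velocity, or None when the team has no velocity."""
    if not iterations:
        return None
    # Condition 1: the iterations are all the same length.
    if len({it.length_days for it in iterations}) != 1:
        return None
    # Condition 2: every iteration completed something releasable.
    if any(it.releasable_points <= 0 for it in iterations):
        return None
    # Only releasable work counts; handed-off work is deliberately ignored.
    return [it.releasable_points for it in iterations]


if __name__ == "__main__":
    steady = [Iteration(10, 21), Iteration(10, 18), Iteration(10, 20)]
    stretched = [Iteration(10, 21), Iteration(14, 18)]
    code_only = [Iteration(10, 0, 30), Iteration(10, 0, 25)]
    print(velocity_history(steady))
    print(velocity_history(stretched))
    print(velocity_history(code_only))
```

The team in the conversation above would fall into both of the `None` cases: their Sprints stretch, and what they count is handed off rather than finished.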

Anti-patterns

In my book on software development metrics, I suggest that there are two reasons to track metrics at the delivery team level: To steer work in progress, and to quantify the effects of process improvement efforts. There are other uses for metrics at higher levels in the organization, but at the team level those are the main uses.

Velocity can be used to steer work in progress provided it is used as intended. Knowing how much a team generally delivers in a unit of time gives us a basis to predict when a given amount of scope is likely to be completed, or to plan how much scope can be completed by a target delivery date. But when teams are not using a time-boxed iterative process model correctly, then whatever they are reporting as “velocity” will not be useful; it may actually be misleading.
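As a rough illustration of that kind of forecasting, the sketch below turns a handful of recent velocity observations into a range. The function names are my own inventions, and using the best and worst recent iterations as the bounds is just one simple assumption; a team might reasonably prefer a rolling average or something more statistical.

```python
import math
from statistics import mean
from typing import List, Tuple


def forecast_iterations(remaining_points: int,
                        recent_velocities: List[int]) -> Tuple[int, int]:
    """Return (optimistic, pessimistic) iteration counts for the remaining scope."""
    if not recent_velocities or min(recent_velocities) <= 0:
        raise ValueError("need observed, positive velocities to forecast")
    # Optimistic: every future iteration matches the best recent one.
    best = math.ceil(remaining_points / max(recent_velocities))
    # Pessimistic: every future iteration matches the worst recent one.
    worst = math.ceil(remaining_points / min(recent_velocities))
    return best, worst


def scope_by_date(iterations_available: int,
                  recent_velocities: List[int]) -> float:
    """Estimate how much scope fits before a target date, using mean velocity."""
    if not recent_velocities:
        raise ValueError("need observed velocities to forecast")
    return iterations_available * mean(recent_velocities)


if __name__ == "__main__":
    observed = [18, 20, 22]  # made-up numbers for illustration
    print(forecast_iterations(100, observed))
    print(scope_by_date(4, observed))
```

Note that both functions take observed velocities as input. Neither accepts a target, which is the point: the forecast follows from what the team has actually delivered.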

Velocity can be used to quantify the effects of process improvement efforts provided it is used as intended. As long as a team doesn’t fudge the numbers to avoid punishment, we can observe improvements in velocity over time. If a three-point Story takes a team eight days in March, and four days in October, it sure looks as if they have improved something, assuming they aren’t gaming the numbers.

In the book, my approach is to explain how a metric pertains to steering work in progress or process improvement, the mechanics of collecting and interpreting the data, and common anti-patterns; that is, abuses of the metric.

It turns out that velocity is particularly prone to abuse. It seems that “everyone” wants to be Agile, and people typically learn that Agile requires tracking velocity. So they start tracking velocity from Day One, even though they may not (yet) be able to deliver a production-ready solution increment in the space of a single iteration. There may be years of organizational changes ahead before they reach that point.

But they optimistically start tracking velocity anyway, even though they don’t have any. And that leads to all sorts of problems. The key anti-patterns listed in the book are:

Setting targets for agile velocity. Velocity is an empirical observation of a team’s actual delivery performance; it is not a goal to aim for. If you set targets, your teams will simply adjust their Story sizes so that they appear to hit the targets. Nothing of substance will change.

Substituting velocity for percentage complete. In a linear process, it’s sensible to track the percentage of scope completed to date. When organizations first apply Agile methods, they often re-name this metric “velocity” in order to “sound more Agile.” As they aren’t (yet) using time-boxed iterations properly, it’s only a name game.

Instantaneous maximum agile velocity. A team has to settle in for several iterations before we can get meaningful observations of their delivery performance to use in forecasting. A common mistake is to assume a team can achieve an arbitrary “maximum” velocity from the very beginning. Organizations that make this error tend to plan too much work for their first release or two. When it doesn’t happen, they start to wonder whether Agile was such a good idea in the first place.

Projected performance based on wishful thinking. Sometimes, stakeholders try to convince teams to adjust their estimates so they can get more done. This approach doesn’t actually result in more getting done, Agile or not. In many cases, teams try to achieve (or are asked to achieve) so-called “stretch goals.” In effect, we are ignoring the reality of the team’s demonstrated delivery performance and substituting wishful thinking for it. A team can look for ways to improve its process or its technical practices, but they can’t simply “stretch” their true delivery capacity any more than we can stretch a 12-ounce glass bottle so it will hold 24 ounces.

Comparing agile velocity across teams. Organizational leaders need to be able to roll up metrics from the team level to an executive dashboard, possibly traversing intermediate layers of the organization along the way. It’s common for them to assume they can do the same with velocity. But velocity is highly dependent on how each team operates, as well as on the nature of the work they perform. Velocity observations cannot be compared meaningfully across teams. One thing organizations do to try to make it possible is to “normalize” Story Points for all their teams. The practical effect of doing that is to make all the velocity measurements completely meaningless. Any forecasts based on such measurements are likely to be wrong. Might as well flip a coin.

What Are We Supposed to Do, Then?

If you can implement a time-boxed iterative process model, regardless of the specific method you choose (Scrum, XP, etc.), then velocity observations will be usable for purposes of steering the work and quantifying process improvement. If this is not feasible in your organization, either by management intent or due to the challenges of restructuring the organization toward an Agile model, then consider alternative metrics that serve a similar purpose but that don’t have the dependency on a time-boxed iterative process.

Metrics from the Lean school of thought are not dependent on any particular process model or method. They simply measure what happens. That means it doesn’t matter if some of your teams are doing well with Agile methods while others are struggling; if some of your teams are burning down a backlog of planned features while others are dealing with unplanned work such as production support tickets or infrastructure requests; if some teams are able to move their product all the way through the delivery pipeline independently while others need support from specialized teams. It also means it will be possible to roll up team-level metrics to the executive dashboard without losing fidelity or meaning.

Take a look at the following basic Lean metrics and see if they appear to meet your needs:

- Lead Time

- Cycle Time, including mean CT and CT variation

- Cycle Efficiency, a.k.a. Process Cycle Efficiency

- Queue Depth
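If it helps to see how little machinery these metrics need, here is a minimal sketch. The definitions are assumptions on my part, since usage varies between sources: Lead Time runs from request to delivery, Cycle Time from the start of work to delivery, Cycle Efficiency is hands-on time divided by Cycle Time, and Queue Depth is the number of items requested but not yet started on a given day. The `WorkItem` record and all function names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean, pstdev
from typing import Dict, List


@dataclass
class WorkItem:
    # Hypothetical record; a real tracker would supply these timestamps.
    requested: date    # when the item was asked for
    started: date      # when someone began working on it
    delivered: date    # when it reached production
    touch_days: float  # days of actual hands-on work


def lead_time(item: WorkItem) -> int:
    return (item.delivered - item.requested).days


def cycle_time(item: WorkItem) -> int:
    return (item.delivered - item.started).days


def cycle_efficiency(item: WorkItem) -> float:
    # Share of the cycle time spent actively working rather than waiting.
    ct = cycle_time(item)
    return item.touch_days / ct if ct > 0 else 0.0


def queue_depth(items: List[WorkItem], on_day: date) -> int:
    # Items already requested but not yet started on the given day.
    return sum(1 for i in items if i.requested <= on_day < i.started)


def summarize(items: List[WorkItem]) -> Dict[str, float]:
    cycle_times = [cycle_time(i) for i in items]
    return {
        "mean_lead_time": mean([lead_time(i) for i in items]),
        "mean_cycle_time": mean(cycle_times),
        "cycle_time_stdev": pstdev(cycle_times),  # one way to express CT variation
        "mean_cycle_efficiency": mean([cycle_efficiency(i) for i in items]),
    }


if __name__ == "__main__":
    items = [  # made-up data for illustration
        WorkItem(date(2024, 1, 1), date(2024, 1, 5), date(2024, 1, 15), 4.0),
        WorkItem(date(2024, 1, 2), date(2024, 1, 10), date(2024, 1, 16), 3.0),
    ]
    print(summarize(items))
    print(queue_depth(items, date(2024, 1, 7)))
```

Nothing here depends on Sprints, Story Points, or a particular process model. The only inputs are dates and hands-on time, which is why the same calculation works for a feature team and a support team alike.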

Conclusion

Agile Velocity is probably the single most abused metric associated with Agile development. Bear in mind it is not mandatory to track velocity in order to “be Agile.” If your team satisfies the dependencies for velocity to be meaningful, it may be useful to you. Otherwise, look for metrics that make sense in your context. Be practical.