What’s Worse Than Not Automating Your Software Delivery Pipeline?

Okay. Sounds good. What are “all the things,” by the way?

Well, kind of a lot of things, actually. Maybe one or two more than most people think about when they first get inspired to automate their pipeline.

Automate why?

There is value to an organization in having the capability to deliver continuously even if customers are not able to consume change frequently. An unattended software delivery pipeline frees technical staff to spend more time on value-add activities rather than tediously performing repetitive tasks by hand, such as

- Reviewing code by eyeballing it to ensure compliance with coding standards

- Handling merges of code changes made by more than one person

- Checking that application functions return expected results when given predictable inputs under well-known conditions

- Provisioning and configuring environments

- Preparing test data to exercise applications prior to deployment

- Creating or modifying database schemas

- Monitoring production systems to detect common and predictable problems

But there’s a risk that people will get excited about automation and get ahead of themselves. I remember a talk by John Seddon years ago (I can’t find it online just now) about a small company that sent repair people out on trucks to fix things at people’s homes (or something like that).

The company wanted to automate their dispatching process. They asked a software developer what it would take, and he estimated something like 20,000 pounds over several months. Seddon’s company, Vanguard, advised them to wait. Instead of automating their current process, they advised the company to set up a paper-based procedure that would be easy to change or discard, and work out any kinks that way. Once they had a working system, they could automate that system instead of the one they currently had.

They followed the advice. When they had their process worked out using notebooks and slips of paper, they were ready to automate. It ended up costing them about 4,000 pounds and was implemented in one month. Even better: It was the right process.

Before you jump on the automation bandwagon, I suggest you consider two things:

- Be sure you understand what “all the things” really are; there may be more than you realize initially; and

- Be sure you’re doing things in a way that won’t blow up in your face when you automate the process, because whatever you automate will happen a whole lot faster than it did before.

Automate what?

Requirements & validation

The popularity of Specification by Example and several identical or nearly-identical practices such as Acceptance Test Driven Development and Behavior-Driven Development has led people to rush into it, perhaps hoping to reap the benefits quickly and easily.

Two closely related conceptual errors appear to be quite common. First, there’s a tool-centric mentality about software-related work. People choose products before they’ve worked out exactly what they want to do. Second, there’s a rush to automate. People take a tool that can support Specification by Example and the first thing they do is try to write executable scripts. But what are they scripting? Have they clarified intent with stakeholders? Are stakeholders involved in defining the examples? Have they figured out which scenarios are worth automating? Have they settled on consistent terminology to use in writing the scenarios?

It often happens that teams become disillusioned with the tools and (by association) the practices because they find themselves having to discard and re-write scripts over and over again. But that isn’t a “tool” problem.

Solution architecture & design

If your application is designed in such a way that significant manual effort is required to implement most any change, then when you automate the process and begin to deliver frequently or “continuously,” the level of manual effort may become a bottleneck to the company.

An unattended CI/CD pipeline has to satisfy the needs of all stakeholders without requiring a halt for manual review and approval. As a general rule, anything that requires a halt and manual review is probably designed in a suboptimal way. We want to “bake in” everything stakeholders need, so that automation will not result in the rapid generation of old problems. This may include such considerations as:

- logging and reporting to satisfy internal and external auditors

- verification of compliance with industry regulations

- validation of consistency with the company’s branding standards

- built-in and verifiable support for well-known security considerations

- automated fraud detection

- observability, to support automated production system monitoring and recovery

- self-checking of operational health with appropriate logging and notification support

- instrumentation to facilitate analysis and correction of production issues

- feature toggles to enable frequent deployment, including of incomplete features

- loosely-coupled components that can be deployed independently of one another

- runtime enforcement of API contracts

- killability, such that the application does not require a special shutdown procedure and does not leave databases and files in an inconsistent state when suddenly halted

- accept injection of external dependencies such as the system date and time, database connection URLs, and logging services

- consistent exception handling throughout

- other considerations depending on context

You might point out that sounds a lot more complicated than the actual logic in any given “business application” system. Yes, that’s true. We’re already doing these things today; we’re just doing them manually. If we want to automate them, then there will have to be code in place to do the same jobs. And some of it is, indeed, more complicated than typical business application logic.

Are you worried about code bloat? Try installing a typical business application on a pristine development environment. Run a build using a tool like gradle, bundler, or npm. Watch the console as the tool downloads dependencies. You’ll probably notice that very little of the code in your application is actually your application code. Most of it consists of libraries and framework code. Now you might have to add a little more logic to make your application pipeline-friendly. Is that really a big deal?

Building software

Circa 2018 this might seem rather obvious, but one aspect of automating a software delivery pipeline is to automate the build process for the application. This involves managing dependencies among software components and libraries; running tools such as linters, compilers, and linkers; packaging binaries and resource files into deployable units; defining and loading data stores; and other tasks depending on the nature of the technologies involved.

It’s common for applications to be built using a tool that manages dependencies and produces deployable artifacts. But that’s only part of the picture.

Code review is an effective way to minimize problems in application code, from structural issues to consistency with standards. Unfortunately, many teams that have other parts of the delivery pipeline automated are still using manual methods for code review. That leaves them open to human error.

These teams often schedule code reviews either at fixed intervals or on demand when developers have code they want to be reviewed. That approach leads to interruptions in work flow as well as a bottleneck, as the individuals authorized to perform code reviews are usually in short supply and have their own assignments.

Collaborative development methods such as pairing and mobbing provide for real-time, continuous code review as well as knowledge transfer from the rare “senior” team members to everyone else. The latter effect leads to fewer discrepancies between standards and the code people write.

Including appropriate tooling in the automated CI process covers most of the remaining risks in this area. The build should include style checking, linting, and static code analysis in addition to compiling, linking, and low-level automated checking.

Creating environments

I’ve heard quite a few teams say they have an automated CI/CD pipeline in place, only to find out on closer inspection that there are manual steps. They might have to copy production data and modify it to prepare a test environment; often this requires them to modify test cases, as well. They might have a set of static environments predefined for them, into which different development teams take turns loading their applications.

To achieve unattended software delivery, whether to a staging environment or all the way to live production, we can’t have manual steps in the process. Test data must be generated based on the conditions the test cases require. Such data must be designed to exercise the application appropriately, and not merely reflect patterns of data that happen to be present in production this month.

It isn’t reasonable for (say) ten development teams to share (say) two statically-defined test environments whose configurations differ wildly from production. There’s a potential for old versions of libraries to be present in the environment and for other configuration differences to result in false positives or negatives, leading to surprises in production.

I often hear people say they can’t afford to create consistently-defined environments throughout the pipeline. They might make a puppy-like “you-know-what-I-mean” face and rub their thumbs and forefingers together in a gesture that means, “money,” as if that explained it away adequately.

Sorry, friends; that doesn’t explain it away adequately. If you do the math, and I mean the kind of math Troy Magennis and Don Reinertsen do, you’ll see that you can’t afford the cost of waiting for a statically-defined, shared environment to become available to your team, or the cost of discovering and fixing problems that only appear after the code is in production. You can’t afford not to have consistent environments.

An unattended CI/CD pipeline requires all environments to be built on demand based on configuration scripts that are maintained under version control alongside the application code and test code.

To paraphrase a US commercial for a weight-loss program: “It’s not that hard. You write the scripts, you lose the wait.”

Software testing (checking)

Although the term “automated testing” is questionable, test automation continues to be a strong focus in software delivery these days. There’s even a special job title, “Automation Engineer,” which doesn’t particularly imply much about either automation or engineering, as far as I can tell.

Whatever you want to call it, the removal of manual tedium for routine functional validation is a fundamental piece of the continuous delivery puzzle. It has a domino effect of benefits, starting by ensuring consistency across all executions of the test suite and ending by giving testers more time to perform the value-add work they should be doing instead of manual validation.

One reason for the confusion in terminology may be that the same activity and the same tooling may be used for multiple purposes. For example, a tool on the general level of Selenium, Postman, SoapUI, FitNesse, or Cucumber might be used to help express customer needs and achieve common understanding among stakeholders.

The same tool might be used by programmers to help drive out an appropriate design for a solution.

The examples they create may then be used as a regression suite to guard against introducing new errors in existing functionality going forward.

The same examples, or at least the same tooling, might be used to support exploration of the system by professional testers.

When the solution nears end of life, the same suite of examples could be used to guide the development of a replacement solution.

So, is it a communication tool, a design tool, a regression checking tool, a testing tool, or a specification tool? Maybe it depends on what you’re using it for at the moment. The terminology is less important than the substance: Automate repetitive, predictable activities so you’ll have more time for real value-add activities.

Security review

Many organiztions have a manual review step in their delivery process when security specialists examine the proposed application changes to look for security vulnerabilities. This manual step can be replaced by automation in most cases, for most of the necessary security checks. Good CI/CD pipelines often include a “security scan” step during which automated tools check the code for known issues.

Defining the rules for the tools to use is not as difficult as it might sound. Human security specialists are using a set of known rules and guidelines when they examine software. Those rules and guidelines are not “secret.” Security specialists can configure, or help technical staff configure, the tooling to scan for vulnerabilities.

No one can check for unknown issues, even using manual methods, so no risk is introduced by automating this step.

It’s often considered good practice to involve security specialists early in each project, rather than waiting until most of the time and budget have been used up before they have a chance to perform a review. It’s a short step from there to getting their input into configuring automated security scanning tools.

The same rule of thumb applies to other governance reviews, as well. When a reviewer uses a set of documented rules to conduct a manual review, the same rules can usually be applied by an automated tool. With that level of automation in place, stakeholders can have confidence that very few problems will slip through to production, and those can be detected by after-the-fact spot checks rather than by halting the delivery pipeline.

Releasing software

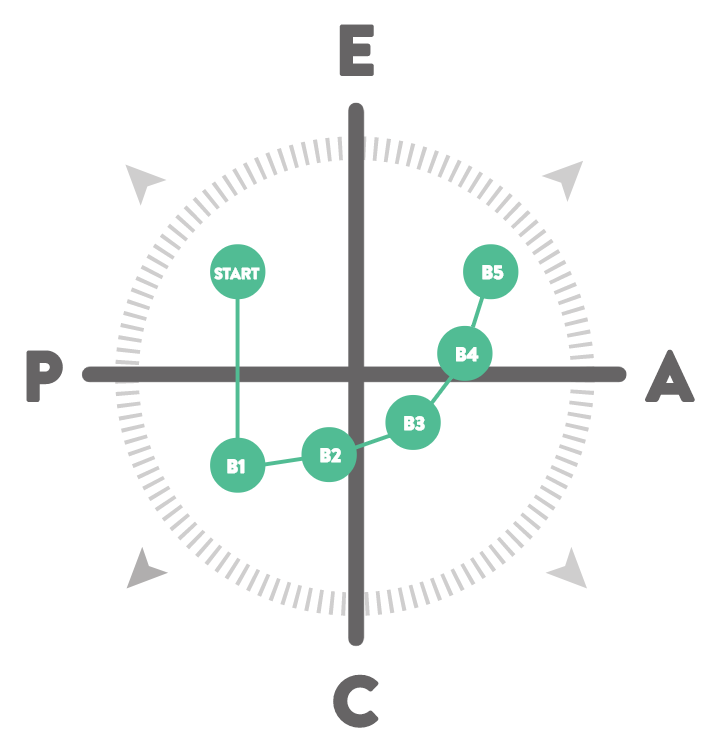

In a continuous deployment system, software changes automatically go from commit to staging with no halts (unless there are errors). In the context of the LeadingAgile compass, this level of automation would support a Basecamp 4 organization.

In a continuous delivery system, software changes continue all the way to live production without human intervention. In the LeadingAgile model, this level of automation would support a Basecamp 5 organization.

Usually, by the time an organization has automated most of the rest of the CI/CD pipeline, automating the final step is pretty simple. It’s just another environment to be generated on the fly by the same scripts that generate all the other environments along the way.

Additional steps for the production environment may include blue/green deployment, canary deployment, smoke testing, A/B testing, and so forth.

Operations

The tools and methods that help us set up an automated delivery pipeline for software can also help with operations.

If we can build environments on demand in a matter of seconds (or maybe minutes), then we need not keep any given production environment “live” for an extended time. The infrastructure can handle dynamic destruction and creation of OS instances and application components as VMs and/or containers. This can provide end users with the appearance of 100% availability while simplifying matters such as system updates, variable demand, and security exploits.

Cloud service providers aggregate logs so that we have a consolidated view of activity compiled in a way that is meaningful for tracing the behavior of a single application, even if that application was “up” while the OS instances that hosted it were destroyed and recreated many times.

Some of the contemporary approaches to operations include ideas like infrastructure as code and phoenix servers. It’s possible to set things up such that no VM has a root account. That helps defend against hackers. When all servers of a given type are generated from the same configuration script, there can’t be any question of inconsistent definitions. When servers are routinely destroyed and re-created, even when no application changes have to be deployed, there can’t be any configuration drift.

The wrong reasons not to automate

I’ve touched on some of the right reasons to think carefully before automating. But in my work I sometimes come across organizations where they cite the wrong reasons not to automate. It is an odd corner of human psychology that might have interested Rod Serling. Let’s explore it a bit.

Maybe in an ideal world, but not here

Well, sure, that’s fine in theory, but out here in the Real World™ it would never work.

That’s the Universal Excuse™ in a nutshell. How ideal does the world have to be before we can automate our software delivery process? The good news is the world doesn’t have to be perfect.

We’re in a regulated industry

This concern usually arises in financial companies, but it can be relevant in other industries as well, such as energy and defense, as well as in governmental organizations.

Compliance became especially important to IT organizations after the passage of certain laws intended to provide improved security for investors, including Sarbanes-Oxley (US, 2002), and the Gramm-Leach-Bliley act (US, 1999).

Regulations are also in place to protect the personal information of individuals, including a wide range of guidelines for the protection of personally identifiable information (PII) such as the one published by the US National Institute of Standards and Technology (NIST). The recent passage of the General Data Protection Regulation (GDPR) by the European Union extends the protections against abuse of personal information.

A number of other regulations apply to financial institutions, procedures, and transactions, as well. All these are intended to increase the confidence of investors and customers in the soundness of the economic system.

For IT organizations, the regulations pertaining to their industry usually boil down to a couple of relatively simple concepts:

- Ensure no single individual working alone can alter financial data; and

- Ensure personally identifiable information (PII) of customers, suppliers, partners, etc. is not exposed to anyone who is not expressly authorized to see it.

The first concern is handled by ensuring that any transaction resulting in the movement of funds has to be touched by at least two people. This is a procedural matter in business operating units of the company. As we in the IT field write the applications to support those operations, we have to be aware of the regulations so that we will not design applications that enable a single individual to alter financial records, working alone.

The second concern affects us in the IT area because we must be sure (a) production systems do not display PII information in the clear, and (b) test data does not contain any PII. It’s pretty easy to satisfy those requirements.

Regulations in other industries often pertain to the safety of the products and services offered, environmental stewardship in the manufacture of products, safety for the users of products and services, and protection of worker rights. Some of those affect the way we carry out our work in the IT department.

You probably remember the case of the Volkswagen software engineer who was sentenced to prison for writing code that enabled vehicles to pass emissions tests fraudulently. This happens rarely, but we might expect to see an increase in cases like this. Software developers must be diligent about ethics when working on code that has safety implications, such as balancing loads on tractor-trailer vehicles, operating medical equipment, or navigating self-driving cars.

Most organizations implement the concept of Separation of Duties to help them comply with regulations like these. The idea is that more than one pair of eyes has to have a look at anything that might run afoul of formal regulations, safety, or general ethical concerns.

And thus we come to the typical excuse not to streamline the software delivery process: Separation of Duties is misunderstood to mean each activity involved with creating a software solution must be carried out by a different individual or a different team. It’s not okay, for instance, for an individual to perform both the programming and the testing of a software feature. Similarly, it’s not okay for software developers to know the rules that security specialists use to review code.

There is one small problem with this argument.

It is not true.

Organizations can separate the development network (or subnet) from the production one, as well as separating test and production databases, test and production instances of back-end systems, servers, service APIs, mainframe LPARs, and other resources. Development teams have no need to access production data.

It is a fundamental error for organizations to depend on extracts of production data for purposes of testing and checking. This is one of the most debilitating misconceptions that is widely held in our field. It needs to go away. Test data must be crafted to align with test cases so that every execution of a test plan is identical. Otherwise, we might as well not bother testing at all.

In my experience, when people have insisted on using production data for test purposes, it has turned out that they did not really understand how the systems under test worked, what the data could look like, or how the data flowed within a large system or between dependent systems. In those organizations, functional checking of system behavior between components and in end-to-end-transactions was handled manually. There was low confidence that test scenarios could be duplicated reliably.

The uncertainty leads to fear, and the fear leads to a perceived dependence on production data for testing. After all, the systems work with production data, even if we aren’t sure why.

The cure is to eliminate the sources of fear by creating intentionally-designed test cases that use intentionally-designed data. It’s quite possible this will have to be done incrementally over time, as we learn more about the systems and the data. A gradual approach is okay; sitting still isn’t.

Even production support activities can be organized in such a way that the engineers do not see live production data even as they resolve problems in live production systems. It takes a bit of effort, but more often than not it’s worth the cost.

But…but the auditors…the auditors!

Talk to your auditors and find out what they need. You might be surprised. Typically they aren’t interested in imposing draconian rules and procedures. They need reports that provide evidence (a) a defined process exists and (b) people are following it. Most of that sort of information can be generated automatically by the various tools in the CI/CD pipeline.

The separation of duties is between production and non-production; not between various sub-disciplines of software work.

Compliance doesn’t have to be assured by stopping the work and waiting for a human to eyeball it. Most compliance requirements can be baked into the process and satisfied automatically.

Our product combines hardware and embedded software

This excellent article by Yaniv Nissenboim describes the value, work flow, and infrastructure for a practical CI/CD pipeline for embedded software products. David Rosen, in a less detailed article on the subject, touches on the issues of legacy support and regulatory compliance. “Legacy” is of particular importance in this space, as you can never deprecate an old version of anything that is running in a physical product out in the world. Jakob Engblom of Intel, which has a strong interest in the subject, adds more perspective to the topic.

James Grenning, a well-known leader in the embedded software field and the author of Test-Driven Development for Embedded C is also among the authors of the Agile Manifesto and an early adopter of Extreme Programming (XP). He has been applying ideas of continuous integration and continous deployment to embedded systems, and teaching same, for many years.

I don’t want to understate the challenges in this area, but neither do I want to acquiesce to the common excuse that it’s “impossible” or “too costly” to take this approach in the embedded space. Embedded systems are delivered this way in several large companies today, spanning applications from agriculture to aeronautics. There’s no reason the rest of the alphabet can’t be covered, as well.

We have to test against a gazillion browsers/devices

The proliferation of browser products and versions, OS platforms, mobile devices with numerous unique characteristics and Web and mobile technologies means that no software targeted to these clients can be fully tested against every permutation. Many people use this as an excuse not to try and automate their CI/CD pipelines.

This excuse strikes me as especially odd in view of the fact an automated suite of functional checks could discover any problems much, much faster than manual checkers could possibly do. The more different combinations and permutations you need to check against, the more you need automation, not less.

Checking against a large number of platforms and software versions in house could be a crippling expense. Fortunately, many companies exist whose sole business is to run exhaustive automated checks of their clients’ applications against a massive number of platform and software combinations. These services are available for both Web browsers and mobile devices. Use them.

But the cost…the cost! Sure. Find out what it costs. Then compare that against the cost of not having adequate testing on your product, and/or not being able to complete your test suites manually in time to make a difference in the market.

We have COTS packages in the mix

Most organizations of appreciable size run a combination of home-grown and third-party applications (commercial off-the-shelf systems, or COTS) in production. This isn’t strictly a “legacy” concern, but some of the older COTS products were designed in an era when a monolithic architecture made good sense.

Some of these products are designed to be a platform for multiple solutions, like Salesforce, SAP, Siebel, Oracle Financials, Oracle Manufacturing, and a host of others (no pun intended). That characteristic often causes pain in organizations where people want to try to adopt agile development methods, with the team responsible for each hosted application operating autonomously and able to deploy incrementally without impacting the other teams.

There are also practical issues for creating automated unit test suites for applications that are embedded in one of these application platform tools, as well as application-related logic that is housed in business rules engines, data warehouses, data cubes, database stored procedures, ETL tools, integration servers, business process orchestration tools, or (yes) spreadsheets.

The primary excuse here is cost, and the primary cost is that of licenses for splitting the applications out into their own instances of the enclosing “platform” products. As usual, I advise assessing the cost of mitigating these solutions against the cost of not doing so. A Lean-style value stream analysis could reveal the cost of business as usual is much higher than expected.

An anecdote: I was working with a large company (about 123,000 employees in 150 countries) a few years ago. In one area of the business, they had six applications running on a Siebel platform. There was a transaction database and a decision support database, some custom work flows, and some custom forms. Each of the applications was supported by three teams in three geographical locations: One for the transaction database, one for the decision support database and forms, and one for the custom workflows.

The company had one production Siebel instance, one for testing, and one for development. The six-times-three teams had to time-share the development and test environments, and all their changes were deployed to production in a single mass at the same time every nine to twelve weeks. There was no way to roll back one of the applications alone; it was all or nothing. Usually that meant “all hands on deck” because “nothing was working.”

They asked me how they could streamline the workflow and move to a more “agile” delivery cadence. I told them the answer they didn’t want to hear. The first step would be to set up 18 Siebel instances: 6 each for development, test, and production. That would enable the six products to be managed independently of one another. From that point, they would be in a position to perceive further opportunities for improvement; opportunities that were not easy to see from their current state.

They balked. The license costs would be too great, they said. I painstakingly walked them through an analysis of the costs of their current methods. They nodded and said they understood. They decided to stick with business as usual indefinitely.

There are many other stories like that one. Another company had a major application whose logic was divided between Java code and Blaze Rules Engine definitions. The latter were shared across multiple applications. Their Java application was dependent on the rules engine and couldn’t be modified independently, or by the same developers. I’ve seen similar dependencies on WebMethods, BizTalk, and you-name-it.

Sometimes a specialized tool is a key part of a company’s operations, but in many cases companies make almost no use of the specialized COTS products. Someone did a good job in sales, and now the company has Product X. I’ve seen implementations of an integration platform that had a handful of flows defined, and nothing else. I’ve seen rules engines implemented that support maybe 20 rules, and the rules have to be run sequentially so the inference engine (the piece that’s worth the price they paid) isn’t even used. I saw one implementation of BizTalk where the team wrote a user exit to route all the traffic around the tool. The product was in place so the manager who approved its purchase wouldn’t look foolish to his bosses; there was no bona fide business case for a product of that type. This situation is very common. You might take a look at how COTS packages are used in your environment to see if the functionality could be migrated into “normal” code, where it’s more manageable.

In all these situations, there were ways to mitigate the impact on lead time of managing changes to the COTS package and the applications that depended on it. It may not always be cost-effective to “go all the way,” but it’s almost always cost-effective to think about practical improvements over the status quo.

The point here is: Don’t stop thinking when you encounter your first challenge. Sometimes the work is hard. There may still be value in it. You might never get to 10 deploys a day with a COTS package in the stack, but you can definitely do better than one monolithic deploy of 6 applications every 12 weeks. Those “extra” licenses aren’t extra; they’re the price of admission for an automated deployment pipeline.

Our systems perform heavy data analytics

Data analytics has become an important area in information systems. With respect to crafting test data intentionally around test plans, I often encounter the argument that it’s “impossible” or “infeasible” because the application has to apply complicated algorithms to large data sets. Sometimes the data sets are time-dependent, and the “only way” to get test data is to extract live production data and modify it.

Do you remember a post of mine from a short while ago about property-based testing? In it, I concluded that PBT might be useful in some circumstances, but the effort required to define meaningful data generators might make it less cost-effective in most situations.

Well, lookie here: A circumstance.

When people argue that it isn’t “feasible” or “practical” to craft data specifically to exercise complicated data analytics algorithms, they’re thinking about conventional example-based checks. And they’re right. It would be a monumental headache to try and create a comprehensive and meaningful set of examples for that sort of application.

But it is feasible to develop data generators that can produce a useful test data set based on the properties or characteristics of the data being analyzed.

It seems to me if you understand the “shape” of the data your application processes, then you can identify the salient properties of that data and write data generators for a PBT tool. You don’t have to be a quant yourself to understand just that much about the data; especially if you can ask quants to help you. If you don’t understand the shape of the data, the implication is you’re depending on production data extracts for testing because you really don’t know how to test the thing. That’s a learning opportunity, not an excuse to avoid automation.

We have snowflake servers

In one client organization, the infrastructure support group was responsible for about 100 Linux servers and about 65 Windows servers. Most of these fell into a handful of categories that could have been supported by a few Chef recipes. A few were one-off configurations supporting COTS packages that had dependencies on obsolete versions of libraries, or that couldn’t support TLS 2. But only a few.

They managed all these instances manually. There were no scripts under version control. Some engineers had written their own scripts to simplify their own lives, but almost all these contained hard-coded values and/or required interactive responses to prompts.

The excuse for not automating the provisioning of 95% of the servers was that the remaining 5% were snowflakes. No one in the organization knew how to build any of the snowflake servers from scratch. They had poked and prodded them over the years, making small changes to config files and applying patches.

It seemed a high-risk situation to me. Those engineers were just one hardware failure away from a very unpleasant weekend. If I worked there I would have been more comfortable building and rebuilding the snowflake servers based on scripts, even if each script was only valid for a single server, just so we would understand what was in them.

It seems to me the more of something you have to support, the stronger the argument in favor of automation, and not the reverse. Also, the more unusual or unique a snowflake server is, the more valuable it is to have an “executable build sheet” to pull you out of the ditch when it fails.

We have significant back-end systems

Large organizations that have been around a while often have significant investments in IBM mainframe (zSeries) technologies, and/or IBM midrange (iSeries), HP NonStop (formerly Tandem), and other platforms that don’t readily lend themselves to the “infrastructure as code” approach.

Mainframes don’t run in the cloud. In response to that statement recently, a friend of mine reminded me of the MacBook Pros that have been loaded with zOS. With 32GB of RAM you have a sluggish single-user mainframe-on-a-lap. Cool, but not a real solution. It’s conceivable that one day mainframes could run in the cloud. For practical purposes today, it’s not an option.

So, where were we? Oh, yeah: Mainframes don’t run in the cloud. So, what can we do with these systems? The first step is to determine which of the back-end systems, if any, need to be part of an automated CI/CD pipeline. That decision must be based on the business operations that are supported by the back-end applications.

Contrary to the usual excuses, the decision isn’t a technical one. It depends on business needs. Referring to the LeadingAgile compass, let’s consider how software has to be delivered to support business operations at each Basecamp.

If an application supports business operations anywhere on the adaptive side of the model, the lead times that are typical in mainframe shops (weeks to months) will not be adequate. Lead times will have to be reduced to days (Basecamp 3) or minutes (Basecamp 5).

Applications that support business operations on the predictive side of the model might benefit from improved lead times, or at least a bit more automated checking, but probably won’t need to move to a fully automated CI/CD pipeline. Lead times in the range of a few weeks will probably be sufficient.

Having determined an application needs to support frequent and rapid change in order to support business operations, we can further refine the analysis by looking at the different kinds of processing the application performs.

Don’t say “can’t.” Business needs drive this. The word “must” trumps the word “can’t.” Better figure it out. You might not find Open Source projects that do what you need to do, but don’t worry; zOS is a programmer’s playground. If you can dream it, you can write it.

Mainframe applications are either “batch” or “online.” The batch applications run periodically, possibly for several hours, and process large input data sets all in one go, usually by sorting, merging, and processing the data sequentially. If changes in application logic have to be deployed within a single day, this can usually be accomplished by improving automated testing for the application, and very little else. The deployment can occur at any time day or night except while a batch run is in progress. It’s not difficult to fit a daily deploy cycle into the batch run schedule.

“Online” applications support interactive transactions, business-to-business transactions, and decision support systems. They are typically available to end users all the time. They run under control of a so-called teleprocessing monitor or transaction monitor. Representative products include CICS and IMS/TM.

While it isn’t generally feasible to treat mainframe LPARs the same way as cloud-based VMs for purposes of “infrastructure as code,” it isn’t really necessary to create a whole new LPAR for each application deployment. It’s feasible to “clean” the configuration, if necessary, and move the application executables into production libraries. “Cleaning” or “resetting” a mainframe configuration generally amounts to deleting files and possibly restarting long-running jobs or subsystems. You don’t actually rebuild the system, as you might do for a Linux VM on a cloud service.

For CICS systems, it’s feasible to start new CICS instances and destroy old ones in much the same way as we manage VMs in a cloud environment. CICS is, in essense, a container, conceptually analogous to docker or rkt. It’s a big container, but still “just” a container. To “deploy” a modified CICS application, we can start up a new CICS environment, gracefully transfer client traffic to it, and shut down the old environment. The time required depends on the number of resources the CICS system has to initialize on startup, but it usually amounts to around 10 minutes.

Some clients have pointed out they can’t have development teams starting up CICS regions on demand because the variable workload could affect production loads and/or drive usage above the next price point in IBM’s pricing model. In these cases, it may be feasible to set up a fixed number of CICS environments and have development teams time-share them. Teams can shutdown, reconfigure, and restart the environment they temporarily “own.” This is slightly less seamless than an on-demand process, but may help with controlling workloads and costs while still providing sufficient deployment flexibility.

And yes, IBM and other mainframe-focused software companies do offer CI/CD tooling for that platform.

So, there aren’t really any excuses here. There are some practical limitations, but no genuine reasons to assume mainframe systems can’t participate in a CI/CD pipeline either alone or in conjunction with other platforms in the enterprise.

Conclusion

There are very good reasons to think before automating, lest you automate the wrong processes and end up generating junk at high speed. On the other hand, there are very few good reasons why automating “all the things” won’t be both technically feasible and cost-effective in just about any environment in just about any industry sector.

You’ve heard horror stories from organizations that had poor results from automation initiatives. Resist the temptation to use those stories as excuses not to automate software delivery and deployment in your own organization. Think it through.

Comment (1)

Bram

The John Seddon video you refer to may be Re-thinking IT, the keynote he gave at Öredev, really good: https://vimeo.com/19122939