Test Scope: What’s the Scope of a Unit Test?

“Unit test scope” is a funny term. It seems to be the subject of all kinds of debate. Most of that debate is unresolvable, as it involves personal opinion and emotion above all else. Most of the issues people have with the term come down to just two things: The word “unit,” and the word “test.” People can accept the other words in the phrase, as long as we remove those two.

Some people object to the term unit test because of the word “test.” An executable unit test is really a functional check, which is not the same thing as testing the software in the true sense. I agree with that. However, most of the world doesn’t use that terminology, and I’ve learned it’s all but impossible to change entrenched industry lingo, so I’m going to write “unit test” in this piece, even though you and I know all the examples here are really checks. It will just have to do.

Some people find value in unit tests and others don’t. Okay, live and let live. I’m in the “finds value” group. I’m not going to debate it here. It’s a “given” for purposes of this post that unit tests are valuable. In fact, I’ll go so far as to assert it’s even more valuable to write the unit tests before writing the production code. If you fundamentally disagree that unit tests are useful, you can save yourself some time; have a nice day.

Everyone has some idea of what a “unit” of code is, so everyone has some idea of what a “unit test” is. The trouble is there are lots of different ideas about that. I’ve seen unit tests that cover an entire executable, a collection of collaborating objects, an end-to-end transaction, an entire batch jobstream, a series of steps in an ETL process, and all kinds of other things that are of large scope and that have lots of tightly-coupled dependencies. And no one is objectively “wrong” to call those things “unit tests,” because there’s no generally-accepted definition.

But there are a couple of ideas about unit tests that I think are pretty useful.

Michael Feathers

Michael Feathers came up with a set of guidelines for designing unit tests way, way back in the proverbial Mists of Time that goes like this:

“A test is not a unit test if:

- It talks to the database

- It communicates across the network

- It touches the file system

- It can’t run at the same time as any of your other unit tests

- You have to do special things to your environment (such as editing config files) to run it”

That’s from a 2005 article. This next one was published in 2018, but it isn’t a brand new idea.

Michael “GeePaw” Hill

This is GeePaw’s TDD Pro-tip #5: “No, Smaller”.

“In TDD, almost without exception, we want everything: a test, a method, a class, a step, a file, really, almost everything, to be as small as it can be and still add value to what we’re doing.”

If that sounds like a fairly loose definition, it’s only because it’s a fairly loose definition. It has to be. The smallest unit that still adds value to what we’re doing varies by programming language and available tooling for running test cases. Sometimes, it depends on just how you write the solution, too.

Test Scope: Java

Let’s consider Java, a language widely used for business application programming. I’ve used an example in the past of an iterative solution to the Fibonacci problem that I found on StackOverflow. Here’s a slightly-modified version of it:

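    import java.util.ArrayList;
    import java.util.List;

    public class FibIterative {

        // Returns the n-th value of the series, counting from fib(0) = 0.
        public int fib(int n) {
            int x = 0, y = 1, z = 1;
            for (int i = 0; i < n; i++) {
                x = y;
                y = z;
                z = x + y;
            }
            return x;
        }

        // Returns the first `limit` values of the series.
        public List<Integer> fibSeries(int limit) {
            List<Integer> result = new ArrayList<>();
            for (int i = 0; i < limit; i++) {
                result.add(fib(i));
            }
            return result;
        }
    }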

If we wanted to write a unit test for that code, we could do it like this using JUnit:

    @Test
    public void first_10_values() {
        List<Integer> expected = Arrays.asList(0, 1, 1, 2, 3, 5, 8, 13, 21, 34);
        assertEquals(expected, fib.fibSeries(10));
    }

That seems fine. If anything goes wrong with the implementation, that unit test will fail. But what if we apply GeePaw’s “No, smaller” guideline? Technically, that looks like this:

[1] “Is that small enough?”

[2] “No, make it smaller.”

Sorry if that was too technical.

The thing about the Fibonacci series is that the first couple of values are sort of predetermined, and from a certain point onward the values are calculated based on the preceding values. Granted, this is a bit trivial, but the pattern occurs in many more-realistic business application scenarios. We’d like to have unit tests that zero in on the exact part of the logic that’s incorrect. That saves us some analysis time, and also helps us plug and play different implementations without having to rip up our test suite. If the same tests pass before and after dropping in a new implementation, then we know the new one behaves the same as the old. One less thing to worry about in this crazy old world.
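One way to picture the plug-and-play point is a single set of checks applied to two interchangeable implementations. The sketch below is only an illustration: the `FibSwapSketch` class, the recursive variant, and the `check` helper are hypothetical names that don't appear in the original code, and it uses plain Java rather than JUnit so that it stands on its own.

```java
import java.util.function.IntUnaryOperator;

// Hypothetical sketch: names here are illustrative, not from the original code.
class FibSwapSketch {

    // Iterative version, same logic as FibIterative.fib above.
    static int fibIterative(int n) {
        int x = 0, y = 1, z = 1;
        for (int i = 0; i < n; i++) {
            x = y;
            y = z;
            z = x + y;
        }
        return x;
    }

    // An alternative implementation we might drop in later.
    static int fibRecursive(int n) {
        return n < 2 ? n : fibRecursive(n - 1) + fibRecursive(n - 2);
    }

    // The same small checks, applied to whichever implementation is passed in.
    static void check(IntUnaryOperator fib) {
        int[][] cases = { {0, 0}, {1, 1}, {4, 3}, {8, 21}, {10, 55} };
        for (int[] c : cases) {
            int actual = fib.applyAsInt(c[0]);
            if (actual != c[1]) {
                throw new AssertionError(
                    "fib(" + c[0] + ") expected " + c[1] + " but was " + actual);
            }
        }
    }

    public static void main(String[] args) {
        check(FibSwapSketch::fibIterative);
        check(FibSwapSketch::fibRecursive);
        System.out.println("both implementations pass the same checks");
    }
}
```

Because `check` knows nothing about how the values are computed, swapping implementations doesn't require touching the checks themselves.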

With that in mind, we could write unit tests to validate the method that computes each number:

    @Test
    public void the_2nd_value_is_1() {
        assertEquals(1, fib.fib(1));
    }

    @Test
    public void the_5th_value_is_3() {
        assertEquals(3, fib.fib(4));
    }

If we decided to spot-check several values, we could write a data-driven test case that’s equivalent to a whole bunch of those little test cases (some boilerplate code omitted):

    @Parameterized.Parameters(name = "{index}: Next value after {0} is {1}")
    public static Iterable<Object[]> data() {
        // Each row is {argument passed to fib, expected result}.
        return Arrays.asList(new Object[][] {
            {1, 1},
            {4, 3},
            {8, 21},
            {10, 55},
        });
    }

    . . .

    public FibonacciParameterizedTest(int after, int next) {
        this.after = after;
        this.next = next;
    }

    . . .

    @Test
    public void itComputesTheNextValueInTheFibonacciSeries() {
        assertEquals(next, fib.fib(after));
    }

Okay, those test cases are pretty small. Any smaller, and they wouldn’t be meaningful. But what if the production code were implemented differently? Here’s a Java implementation using a lambda expression:

    public List<Integer> generate(int series) {
        return Stream.iterate(new int[]{0, 1}, s -> new int[]{s[1], s[0] + s[1]})
            .limit(series)
            .map(n -> n[0])
            .collect(Collectors.toList());
    }

Here we have a single line of code that produces the whole series. Do those little tiny unit tests still make sense? I don’t think so. This code doesn’t repeatedly call a method to calculate each value. All that work is handled by the iterate, limit, map, and collect methods. We needn’t test those separately because they’re supplied by the Java Development Kit; they aren’t our code. Were we to write the same logic in C, without a library, it would be a different story; but in this case the library is part of our tooling. (If you lack confidence that your tools work, then you have a different problem altogether.)

That first unit test we looked at would be the smallest meaningful one in this case. Something like this, maybe:

    @Test
    public void it_generates_the_first_10_values() {
        List<Integer> expected = Arrays.asList(0, 1, 1, 2, 3, 5, 8, 13, 21, 34);
        FibLambda fib = new FibLambda();
        assertEquals(expected, fib.generate(10));
    }

This meets GeePaw’s criteria for small enough, I think. We might be able to force the issue and come up with smaller unit tests here, but because of the way the production code is written, doing so wouldn’t yield any value. We’re testing a single line of source code. A lot of stuff happens when that code is executed, but we don’t directly control any of that and it isn’t split out in a way that enables us to test the pieces separately. So, we’re done.

Test Scope: COBOL

Most contemporary languages lend themselves nicely to unit testing frameworks. Traditional languages running on older platforms…not so much. What’s a “unit” in a mainframe environment? Unless you want to beat COBOL code into submission with your bare hands, maybe like this, you’ll be using IBM Rational Developer for zSeries with zUnit, Compuware Topaz Workbench, or rolling your own test harness. The smallest unit of code you’re likely to be able to run independently is a whole load module. (Kids: A “load module” is like an “executable.”)

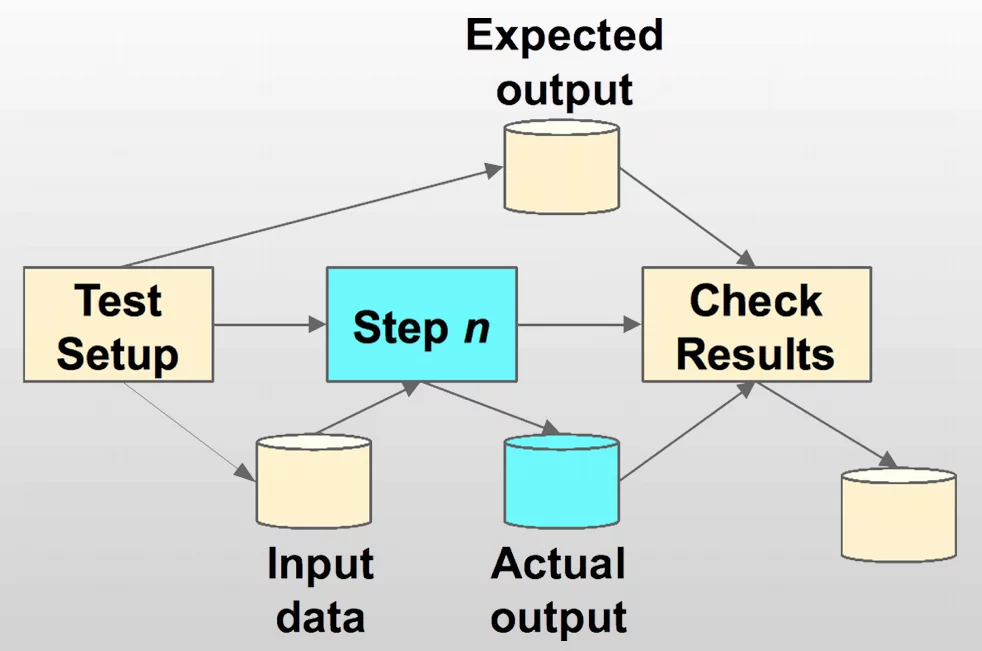

You don’t necessarily need special tooling. You can set up a batch test run more-or-less like this:

There’s nothing required besides standard JCL and utilities. And a lot of people wouldn’t blink if you called a whole batch program a “unit,” because that’s the smallest unit they’ve ever been able to work with anyway. The idea of executing a single COBOL paragraph in isolation would strike most mainframers as a little odd. I’ve even heard the word “impossible” used, although that’s not literally true.

So, if we can accept the idea that a whole load module is the smallest practical unit of code we can test in isolation, we’re good to go.

Except that we’re violating one of Michael Feathers’ principles: We’re touching the file system. We’re touching the heck out of the file system.

Mainframe batch programs generally run against files and databases. That’s what batch processes do, after all: They process large batches of data. And where does data live? In files and databases.

Could we mock that out? Well, maybe we could figure out a way to mock it out, some of the time. But as a practical matter, taking into account the realities of the execution environment, running a test job with test files that are fully under our control isn’t so bad. We’re not introducing dependencies on data sources outside our control, so the test cases are repeatable and consistent. That’s what matters, above and beyond rules and guidelines.
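The idea of test files that are fully under our control isn't specific to the mainframe. As a rough sketch in Java rather than JCL, the check below creates its own input file, runs a small batch-style step against it, and compares the output with known expected records. The `BatchStepSketch` class, its comma-separated record layout, and the `totalByAccount` step are all invented for illustration.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical sketch: the record layout and names are assumptions for illustration.
class BatchStepSketch {

    // Batch-style step: reads "account,amount" records, writes one total per account.
    static void totalByAccount(Path input, Path output) throws IOException {
        Map<String, Long> totals = new TreeMap<>();
        for (String line : Files.readAllLines(input)) {
            String[] fields = line.split(",");
            totals.merge(fields[0], Long.parseLong(fields[1]), Long::sum);
        }
        List<String> out = new ArrayList<>();
        for (Map.Entry<String, Long> e : totals.entrySet()) {
            out.add(e.getKey() + "," + e.getValue());
        }
        Files.write(output, out);
    }

    // Runs the step against files the check creates itself; returns the output records.
    static List<String> runWithControlledFiles(List<String> inputRecords) throws IOException {
        Path input = Files.createTempFile("batch-in", ".txt");
        Path output = Files.createTempFile("batch-out", ".txt");
        try {
            Files.write(input, inputRecords);
            totalByAccount(input, output);
            return Files.readAllLines(output);
        } finally {
            Files.deleteIfExists(input);
            Files.deleteIfExists(output);
        }
    }

    public static void main(String[] args) throws IOException {
        List<String> actual = runWithControlledFiles(List.of("A1,100", "B2,50", "A1,25"));
        if (!actual.equals(List.of("A1,125", "B2,50"))) {
            throw new AssertionError("unexpected output: " + actual);
        }
        System.out.println("batch step output matches expected records");
    }
}
```

The check touches the file system, but every file it reads or writes is one it created, so the result is the same on every run.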

I think it’s fair to call this a “unit test,” in context.

Test Scope: Microservices

What if we travel forward in time instead of backward? I’ve been reading and hearing a lot of people say there’s no need to unit test microservices, because they’re so small already that all we need to do is run API-level checks and we’re done.

As Ernest Hemingway might have said, “Isn’t it pretty to think so?” Oh, wait; he did say that. Now I’m saying it, too.

Depending on the programming language, how the logic is implemented, and just how “micro” the microservice really is (it’s one of those overused popular buzzwords, after all), it’s conceivable that there’s no smaller part to check than the whole service, but it’s more likely the case that there’s more than one line of code behind the API.

The fact the code is invoked over HTTP using a RESTful API doesn’t invalidate all the usual considerations for what is or isn’t a unit test and how small is small enough. If it’s Java or C# or Python or Ruby or something like that, there will be methods in there that we can (and should) validate individually.
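To make that concrete, here is a minimal sketch of the kind of logic that can sit behind a service endpoint and be checked without starting a server. It isn't taken from any real project; the `RpnSketch` class and its `evaluate` method are hypothetical.

```java
import java.util.ArrayDeque;
import java.util.Deque;

// Hypothetical sketch of core logic that an HTTP handler could delegate to.
class RpnSketch {

    // Evaluates a space-separated Reverse Polish Notation expression, e.g. "3 4 +".
    static double evaluate(String expression) {
        Deque<Double> stack = new ArrayDeque<>();
        for (String token : expression.trim().split("\\s+")) {
            switch (token) {
                case "+": stack.push(stack.pop() + stack.pop()); break;
                case "*": stack.push(stack.pop() * stack.pop()); break;
                case "-": {
                    double right = stack.pop();
                    double left = stack.pop();
                    stack.push(left - right);
                    break;
                }
                case "/": {
                    double right = stack.pop();
                    double left = stack.pop();
                    stack.push(left / right);
                    break;
                }
                default: stack.push(Double.parseDouble(token));
            }
        }
        if (stack.size() != 1) {
            throw new IllegalArgumentException("malformed expression: " + expression);
        }
        return stack.pop();
    }

    public static void main(String[] args) {
        // Small checks against the logic itself; no HTTP involved.
        if (evaluate("3 4 +") != 7.0) throw new AssertionError();
        if (evaluate("5 1 2 + 4 * + 3 -") != 14.0) throw new AssertionError();
        System.out.println("RPN checks pass");
    }
}
```

An API-level check would still confirm that the endpoint is wired to this logic, but the arithmetic and error handling can be pinned down at this smaller scope first.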

Here’s a Reverse Polish Notation calculator service by Stacey Vetzal, written in JavaScript, with fine-grained unit tests written in Mocha. Here’s my implementation in Ruby, split out into three projects, one each for the core functionality, the service, and the UI, with unit tests in RSpec.

Both those solutions are dripping with microtests. So, it looks like you can follow Feathers’ and Hill’s guidelines for microservices, after all.

Test Scope: Embedded Systems and IoT

I’m going to gloss over the details here, as the basic information has already been covered. There’s nothing magical or fundamentally different about designing and building software for embedded systems and the Internet of Things.

It’s advisable to separate concerns, keeping code that performs core functionality separate from code that interacts with external interfaces.
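As a minimal sketch of that separation, the core decision logic below depends only on a small interface, so a check can supply readings directly instead of talking to hardware. The `OverheatMonitor` class, the `TemperatureSensor` interface, and the threshold values are hypothetical, chosen only for illustration.

```java
// Hypothetical sketch: class, interface, and thresholds are assumptions for illustration.
class OverheatMonitor {
    private final TemperatureSensor sensor;
    private final double limitCelsius;

    OverheatMonitor(TemperatureSensor sensor, double limitCelsius) {
        this.sensor = sensor;
        this.limitCelsius = limitCelsius;
    }

    // Core logic: no knowledge of how the reading is obtained.
    boolean shouldShutDown() {
        return sensor.readCelsius() > limitCelsius;
    }

    public static void main(String[] args) {
        // In a check, a lambda stands in for the real device driver.
        OverheatMonitor cool = new OverheatMonitor(() -> 40.0, 85.0);
        OverheatMonitor hot = new OverheatMonitor(() -> 90.5, 85.0);
        if (cool.shouldShutDown()) throw new AssertionError();
        if (!hot.shouldShutDown()) throw new AssertionError();
        System.out.println("monitor checks pass");
    }
}

// The external interface: production code would implement this against the device.
interface TemperatureSensor {
    double readCelsius();
}
```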

A lot of the code is in the nature of a finite state machine, taking an action when the device is in a given state and a certain event is detected. The usual design considerations for this category of problem apply. Depending on how complicated the state machine is, you can choose from a range of implementation approaches from a switch statement to the state pattern.

In any case, we’ll usually prefer to isolate the functionality for handling each state in small methods, functions, or subroutines that lend themselves nicely to isolated microtesting. There is no reason not to design the code in this way. There are reasons why it is not usually designed in this way, but those reasons are not technical.
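A minimal sketch of that design, assuming a made-up device with three states: each state's handling lives in its own small method, and a single `next` function dispatches on the current state with a switch statement. The `DoorController` class, its states, and its events are all hypothetical.

```java
// Hypothetical sketch: the device, its states, and its events are invented for illustration.
class DoorController {
    enum State { CLOSED, OPEN, LOCKED }
    enum Event { OPEN_PRESSED, CLOSE_PRESSED, LOCK_PRESSED, UNLOCK_PRESSED }

    // Dispatch with a switch statement; each state delegates to one small method.
    static State next(State current, Event event) {
        switch (current) {
            case CLOSED: return whenClosed(event);
            case OPEN:   return whenOpen(event);
            case LOCKED: return whenLocked(event);
            default:     throw new IllegalStateException("unknown state: " + current);
        }
    }

    static State whenClosed(Event event) {
        if (event == Event.OPEN_PRESSED) return State.OPEN;
        if (event == Event.LOCK_PRESSED) return State.LOCKED;
        return State.CLOSED; // ignore events that don't apply in this state
    }

    static State whenOpen(Event event) {
        return event == Event.CLOSE_PRESSED ? State.CLOSED : State.OPEN;
    }

    static State whenLocked(Event event) {
        return event == Event.UNLOCK_PRESSED ? State.CLOSED : State.LOCKED;
    }

    public static void main(String[] args) {
        // Each handler can be checked on its own, without a device attached.
        if (whenLocked(Event.OPEN_PRESSED) != State.LOCKED) throw new AssertionError();
        if (whenClosed(Event.LOCK_PRESSED) != State.LOCKED) throw new AssertionError();
        if (next(State.OPEN, Event.CLOSE_PRESSED) != State.CLOSED) throw new AssertionError();
        System.out.println("state machine checks pass");
    }
}
```

For a more complicated machine, the per-state methods could grow into separate classes along the lines of the state pattern, and the same small checks would still apply.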

The rules of thumb for writing unit tests for embedded and IoT solutions are the same as those for conventional applications: A unit test doesn’t interact with external dependencies (Feathers) and its scope is as small as we can make it without losing the value the test case provides (Hill).

There may be a few differences in the details, depending on context. For test-driving embedded solutions, we’ll usually do development on a different hardware platform than the target. Our quick TDD cycle will happen on the development environment. An additional step beyond the standard red-green-refactor cycle to compile with the compiler settings for the target environment can expose integration problems early in the TDD cycle.

Test-driving or unit testing IoT solutions usually involves two types of tests: Unit tests, which are no different from unit tests in any other environment, and instrumentation tests, which mock out the input signals from the device. The instrumentation tests are conceptually equivalent to UI tests for applications that present a user interface or API tests for services. Here’s a nice article by Nilesh Jarad on test-driving Android applications.

Conclusion

There are those who disagree, and some of them are pretty darn smart, but if you ask me we should write executable checks or tests at the unit/micro level that follow Feathers’ and Hill’s guidelines, regardless of the domain or technology stack we’re using. My experience, along with that of many thousands of other developers, is that the benefits outweigh the costs by a large margin.