Small Agile User Stories Reduce Variability in Velocity, Improve Predictability

If you estimate the size of your Agile user stories in relative story points, realize that the more large stories you have in your backlog, the less reliable your velocity (trend) will be for planning future work. A velocity trend built on bigger stories is less accurate than one built on smaller stories, which makes it a poor basis for future planning, i.e., as a planning velocity for the next release. The reason for this lies in the nature of relative story point estimation, and there are some statistics to back it up too. Let us delve into this.

By design, relative story point estimates are increasingly less accurate for larger estimates. The Fibonacci sequence (well, a modified version of it: 1, 2, 3, 5, 8, 13, 20, 40, 100) is used as the point scale to model this. The idea is that a story estimated to be size 3 is likely to be in the small range of 2 to 5 actual points (if we were to measure actual points – which we don’t, but that’s another story), whereas a story estimated to be size 13 could be anywhere from size 8 to 20 in “actual” size, and so on up the scale.
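As a rough sketch of that idea, the snippet below lists, for each value on the modified Fibonacci scale, the neighbouring scale values as the plausible range of “actual” size. The function names and the rule “actual size lies between the adjacent scale values” are assumptions made for illustration, not a measured model.

```python
# Modified Fibonacci point scale quoted in the article.
SCALE = [1, 2, 3, 5, 8, 13, 20, 40, 100]


def plausible_range(estimate):
    """Return (low, high): the scale values adjacent to `estimate`.

    Illustrative assumption: the "actual" size of a story falls between the
    neighbouring values on the scale. At either end of the scale there is
    only one neighbour, so the estimate itself is used as the missing bound.
    """
    i = SCALE.index(estimate)  # raises ValueError if the value is not on the scale
    low = SCALE[max(i - 1, 0)]
    high = SCALE[min(i + 1, len(SCALE) - 1)]
    return low, high


def range_width(estimate):
    """Width of the plausible range, a crude proxy for estimation error."""
    low, high = plausible_range(estimate)
    return high - low


if __name__ == "__main__":
    for size in SCALE:
        low, high = plausible_range(size)
        print(f"estimate {size:>3}: actual roughly {low} to {high} (width {high - low})")
```

Under this assumption the range for a 3 is 2 to 5, while the range for a 13 is 8 to 20, so the width of the range grows as the estimate grows.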

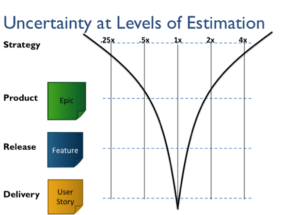

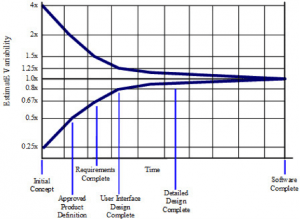

This model of estimation accuracy for story size is analogous to the “Cone of Uncertainty” (CoU) concept for software development projects. The CoU describes the exponentially decreasing uncertainty in project estimates as the project progresses. The CoU shows that the uncertainty decreases as more details about the actual requirements, design and implementation become known. “Estimates created at Initial Concept time can be inaccurate by a factor of 4x on the high side or 4x on the low side (also expressed as 0.25x, which is just 1 divided by 4).” [Construx public web page]. As the project progresses, estimates become increasingly certain until, for example, the day before the actual project completion date, that date is nearly 100% known. A diagram of the Cone of Uncertainty is shown below.

In an analogous way, early on in a project, work is not broken down into detail – we have epics and features. As the project progresses, we refine the backlog by breaking the epics and features down into agile user stories (and estimating their size). This is depicted in the diagram below.

Agile User Stories: Relative Story Point Estimates

Getting back to relative story point estimates, think about the following. The larger the estimated size of an Agile user story, the more error there can be in that estimate compared to the “actual” size of the story, due to the Cone of Uncertainty effect, which is modeled by the Fibonacci (or other non-linear) point scale. For example, a story estimated to be a 13 could be just a bit bigger than another story which is truly an 8, or just a bit smaller than a size 20 story. An 8 could be anywhere from a 5 to a 13, and so on. As you can see, this estimation error is bigger for larger stories. When you add the estimated Agile user story sizes to calculate the velocity, this error adds up. An achieved velocity of, say, 30 with mostly small Agile user stories is something different from a velocity of 30 achieved with larger Agile user stories. Which of these 30s would you have more confidence in using to forecast future velocity?

Adding the caveat, “on average”, would probably be the more accurate way to make some of the above statements. Agile user stories estimated to be size 13 could on average (meaning over many Agile user stories estimated to be 13) be anywhere from an actual size of 8 to 20. And what of this notion of “actual size”? We don’t measure that, and these are all relative sizes, right? But there must be some actual size once the story has been delivered. Whatever it is, whatever its units – Lines of Code, Function Points, some function of effort, complexity, and risk – it does exist, and all Agile user stories, once completed, have an actual size. We don’t have to know that actual size or units to make this argument about the error in the estimates and its effect on velocity.

This can be explained in terms of statistics as well. For those not interested in the math, you can skip ahead two paragraphs. I believe the case has been made for smaller Agile user stories in your backlog reducing variability in your velocity.

If we think of the error in any particular story size estimate (versus the “actual” size) as a random variable (i.e., over all Agile user stories estimated to be a particular size, say a 5), then the variance of that error is bigger for larger Agile user stories, due to the CoU effect. When the story sizes are summed, the variance of the error in the resulting sum (the velocity) is the sum of the variances (assuming for simplicity that the story size estimate errors are independent and normally distributed, which they probably aren’t, but my hunch is that the variance only gets bigger for other distributions). This statistical property may be difficult to perceive on any one Scrum-team-sized project, but think about it across an entire large organization of many, many projects over time. The statistics play out over the large number of samples. The more large Agile user stories that are in the sprint backlogs across the organization, the more variance will be in the velocity and thus the less reliable will be release commitments based on those velocities.
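To make the “sum of the variances” argument concrete, here is a minimal sketch comparing two sprints that both add up to a velocity of 30. It assumes the errors are independent, which is all that the additivity of variance needs, and that the standard deviation of each story’s error is a fixed fraction of its estimate. The 25% figure and the two sprint compositions are hypothetical numbers chosen for illustration.

```python
import math

# Hypothetical assumption: the standard deviation of the estimation error
# is 25% of the estimated size, so larger estimates carry larger errors.
REL_SD = 0.25


def velocity_error_sd(story_points, rel_sd=REL_SD):
    """Standard deviation of the error in the summed velocity.

    Assumes the per-story errors are independent, so the variance of the
    sum is the sum of the per-story variances.
    """
    variance = sum((rel_sd * points) ** 2 for points in story_points)
    return math.sqrt(variance)


# Two hypothetical sprints with the same velocity of 30 points.
small_sprint = [3] * 10        # ten 3-point stories
large_sprint = [13, 13, 3, 1]  # two 13-point stories plus two small ones

if __name__ == "__main__":
    for name, sprint in [("mostly small", small_sprint), ("mostly large", large_sprint)]:
        print(f"{name}: velocity {sum(sprint)}, error sd {velocity_error_sd(sprint):.2f}")
```

With these assumed numbers, the velocity of 30 built from small stories has an error standard deviation of roughly 2.4 points, against roughly 4.7 points for the velocity of 30 built mostly from 13-point stories.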

This strongly suggests that you (and your organization) will be much better off in terms of forecasting your rate of progress (velocity) for planning purposes if you have mostly small stories in your backlog. Not only does the math tell us this, but it passes the common sense test too. If you have a mix of small, medium, and large stories in your backlog, all it takes is one or two of the larger stories planned into a sprint not completing to significantly reduce your velocity from what you planned. The next sprint, your velocity may spike up because you take credit for the large “hangover” story or stories. If this pattern repeats sprint over sprint, your velocity will vary widely and predictions based on it will not be very reliable. On the other hand, if you have mostly small user stories in your sprints’ backlogs, then if the team occasionally does not finish a story this will not impact the velocity statistics significantly, and the average velocity should be reliable enough for forecasting.
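The “hangover” pattern can be sketched with a toy calculation. It assumes a team that plans 30 points every sprint and lets one story slip in every other sprint, taking credit for it in the following sprint. The planned velocity, the story sizes, and the strict alternation are hypothetical simplifications.

```python
import statistics


def velocities_with_slip(planned, slipping_story, sprints):
    """Velocity per sprint when one story slips in alternating sprints.

    In sprints 1, 3, 5, ... the story is not finished, so its points are
    missing. In sprints 2, 4, 6, ... the team finishes the planned work and
    also takes credit for the story that slipped.
    """
    result = []
    for n in range(sprints):
        if n % 2 == 0:
            result.append(planned - slipping_story)
        else:
            result.append(planned + slipping_story)
    return result


# Hypothetical numbers: the same planned velocity, but a 13-point story
# slips in one case and a 3-point story slips in the other.
large_slip = velocities_with_slip(planned=30, slipping_story=13, sprints=6)
small_slip = velocities_with_slip(planned=30, slipping_story=3, sprints=6)

if __name__ == "__main__":
    for name, velocities in [("13-point slip", large_slip), ("3-point slip", small_slip)]:
        print(name, velocities,
              "mean", statistics.mean(velocities),
              "sd", statistics.pstdev(velocities))
```

Both series average 30 points per sprint, but the swing around that average is 13 points in one case and 3 points in the other, which is the difference between a volatile velocity and one that is usable for forecasting.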

Ideally then, we would break all Agile user stories down to about the same small size and forget about sizing them – just count them and use the count for velocity. However, I have found that this is not practical, since there are usually constraints on how much we can break down Agile user stories. Furthermore, and perhaps more importantly, the discussions around story size estimation tend to be very valuable in terms of developing a clear, shared understanding of the Agile user stories.

Summary

The moral of the story is… break down those Agile user stories, or more generally, break down your work into chunks as small as is practical. There are many benefits of working with small Agile user stories besides the reduced variability. These advantages include: increased focus, which helps prevent failure; earlier discovery and faster feedback; shorter lead time and better throughput; and reduced testing overhead. Those are good candidates for the subject of future articles.

A corollary arising from this observation is that if you cannot break down all your Agile user stories to be relatively small, realize that velocity may not be a reliable planning parameter. You might want to also look at story cycle time (per size), for example. In addition, the average story size (velocity/num_stories) could be tracked and monitored. The idea would be that lower is better – velocity will be more reliable for planning when there is lower average story size in the historical backlog.
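A minimal sketch of tracking the average story size (velocity/num_stories) per sprint follows. The sprint history is made-up data, and the function name is hypothetical.

```python
def average_story_size(velocity, num_stories):
    """Average story size for a sprint: velocity divided by stories completed.

    Returns 0.0 when no stories were completed, to avoid dividing by zero.
    """
    if num_stories == 0:
        return 0.0
    return velocity / num_stories


# Hypothetical sprint history: (velocity in points, number of stories completed).
history = [(30, 10), (28, 4), (31, 12)]

if __name__ == "__main__":
    for sprint_no, (velocity, num_stories) in enumerate(history, start=1):
        size = average_story_size(velocity, num_stories)
        print(f"sprint {sprint_no}: velocity {velocity}, "
              f"{num_stories} stories, average size {size:.1f}")
```

In this made-up history the three velocities look similar, but the second sprint’s average size of 7 points per story suggests its velocity is the least trustworthy of the three for planning.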

To help improve your user stories, read 10 Tips For Better Story Estimation by Jann Thomas.

Comments (10)

George Dinwiddie

Hi, Doug,

I agree with you on making stories small, but there are some details that disturb me about your article.

First of all, that Cone of Uncertainty is quite uncertain, itself. See https://leanpub.com/leprechauns for a deeper discussion, but it could be, for example, that our “detailed design” ADDS variability rather than reduces it. The drawing is a conceptual model, not an empirical one. I suspect that life doesn’t follow it nearly as well as people would like.

Second, you say, “a story estimated to be a 13 could be just a bit bigger than another story which is truly an 8, or just a bit smaller than a size 20 story.” Actually, a story estimated to be a 13 could be smaller than an 8, or larger than a 20. These are just estimates, after all. When teams are putting a lot of emphasis on story points I often hear them wanting to re-estimate them in retrospect.

Third, you say “you (and your organization) will be much better off in terms of forecasting your rate of progress (velocity) for planning purposes if you have mostly small stories in your backlog.” I once thought this, but have found it not to be true. Sure you can size small stories more easily. But are they the right stories? I go into more detail on this problem in http://blog.gdinwiddie.com/2014/01/18/long-range-planning-with-user-stories/

Fourth, you say that breaking down stories even smaller and counting them is not practical. I disagree. If you determine the acceptance scenarios needed to verify the story, you can break it down in those slices. If those are still big, you may have missed some scenarios. You can also make simplifying assumptions for small stories, assumptions that will be clarified and expanded in later stories. While /you/ may be having difficulty breaking stories smaller, that doesn’t mean it’s not practical. The fact that people can work this way is an existence proof of practicality. Yes, there is variance in the effort, but comparable (or perhaps less) than bigger stories that are estimated.

Keep pushing,

George

Hi George, thank you for reading and commenting on my post. To be honest, I did think that the CoU numbers (+/- 4x, etc.) were based on empirical data from some studies done way back when. Since the numbers roughly match my experience (on the high side anyway), I just accept that they are close enough to use as a model. So thanks for pointing me to the Leprechaun book, which apparently clears that up. But my referencing of the CoU in this piece was for the concept only, not the numbers. Unfortunately the charts I used have the numbers. So I understand your protest. However, I think I could have made my case without reference to the CoU at all. I’m glad I did though, since it led me (and hopefully our readers) to learn something new from your comment!

Quoting your comment:

“Second, you say, “a story estimated to be a 13 could be just a bit bigger than another story which is truly an 8, or just a bit smaller than a size 20 story.” Actually, a story estimated to be a 13 could be smaller than an 8, or larger than a 20. These are just estimates, after all. When teams are putting a lot of emphasis on story points I often hear them wanting to re-estimate them in retrospect.”

Agreed that these are just estimates. I don’t mean to suggest accuracy or precision in the estimates. I would suggest though that there is a lower probability that a story estimated to be size 13 is smaller than an 8 or bigger than 20 (than of it being between 8 and 20). There are probability distributions around these estimates. The Fibonacci sequence (or any non-linear scale such as geometric) is used to model the decreasing accuracy (wider distributions of error) for larger estimates.

“Third, you say “you (and your organization) will be much better off in terms of forecasting your rate of progress (velocity) for planning purposes if you have mostly small stories in your backlog.” I once thought this, but have found it not to be true. Sure you can size small stories more easily. But are they the right stories? I go into more detail on this problem in http://blog.gdinwiddie.com/2014/01/18/long-range-planning-with-user-stories/”

I am not saying we need to break down all of the work/requirements for an upcoming release in the release planning phase in order to estimate more accurately than we could otherwise how much scope (points) there is for that next release (although I am a proponent of doing so for up to 3 months’ worth of backlog). I am only saying that projections of future velocity based on historical velocity will be more reliable when the historical velocity is associated with a backlog of small stories. The smaller the stories, the more reliable the historical velocity as a predictor of future velocity. I’m still not completely happy with the wording I’m coming up with to express this, but bear with me.

“Fourth, you say that breaking down stories even smaller and counting them is not practical. I disagree. If you determine the acceptance scenarios needed to verify the story, you can break it down in those slices. If those are still big, you may have missed some scenarios. You can also make simplifying assumptions for small stories, assumptions that will be clarified and expanded in later stories. While /you/ may be having difficulty breaking stories smaller, that doesn’t mean it’s not practical.”

By “not practical” I meant that teams I’ve worked with (which are typically new or newer to Agile) generally have a hard time breaking _all_ the work down to small story size (and still have them be good stories, in terms of the INVEST criteria). There are at least some large stories that the team just struggles to slice further. It’s hard work and requires a special skill. I agree wholeheartedly that acceptance criteria/scenarios are a great way to slice large stories. We (LeadingAgile) include this along with several other strategies for slicing features and large stories in our “How to Write Better Stories” training class. Getting back to the practicality point… if most of the team’s stories are small, and large stories are outlier exceptions, then the velocity statistics should be able to absorb (average out) the error in the estimates of the large stories.

“The fact that people can work this way is an existence proof of practicality. Yes, there is variance in the effort, but comparable (or perhaps less) than bigger stories that are estimated.”

I’m sorry I don’t understand this.

Thanks again for your comment.

Eddie

It’s a minor quibble, but rather than “relative story point estimates are increasingly less _accurate_ for larger estimates” I think it might be better to say “relative story point estimates are increasingly less _precise_ for larger estimates, and intentionally so”.

It is also important to recognise that story point estimates are a subjective measure of the team; they only have a true meaning within the context of the specific team and where they are on their journey. I think it is therefore unwise to decouple estimation from planning – even with a sized product backlog they still need to double check during sprint planning that they are confident delivering the totality of stories they have selected for the sprint, not just add up the points and confirm the sum is the same or less than their velocity. A sprint backlog of many small stories is a very different beast from one of equivalent total size but with quite a few large stories; however, that doesn’t necessarily mean the team should only ever agree to sprint backlogs of small stories, or accept any type of backlog so long as it doesn’t exceed their velocity. An agile team builds incrementally _and_ iteratively so they have the means and opportunity to deal with a product backlog consisting of a wide range of story sizes, learning and adapting as they go.

Eddie, thanks for your comment. I like the points you are making. I believe you are saying that teams should not blindly commit to a certain number of points in a sprint based on historical velocity, even if they have all small stories. And, just because they have some large stories, that shouldn’t stop them from committing either. Agreed. The velocity (trend) is just one factor. The collective, shared understanding of the team making mindful decisions should override this and any other “mechanical” data points (provided they have a strong rationale to do so). Did I interpret your points about right?

Tom Henricksen

This is great information and thanks for sharing it! I have had a few discussions with Agile coaches about this. It seems to validate what a lot of us were thinking.

Tom, I’m glad to hear that. Thanks for your comment.

Mike Cottmeyer

George… I disagree with your analysis of Doug’s post. Our practice is mostly centered around helping large, complex, non-agile organizations form teams, select and break down the right work, and interact effectively with processes and groups that are upstream and downstream from the Scrum teams doing the delivery. In this context, I believe Doug’s points were valid.

Doug used the ‘cone of uncertainty’ as a metaphor to illustrate that we know less early than we do late. That should be generally true, it is what we strive for… if it’s not true… then you have no idea what you’re tracking toward and no idea when you are going to be done.

I’d suggest that if you are going to allow that much variability in your estimates, even using story points, and are constantly re-estimating, and thus re-baselining, again… you have no idea what you are tracking toward or any way to communicate with people what they are going to get when you run out of time and money.

In our experience, smaller is better with user stories. We teach teams how to do appropriate forward planning, active risk management, and how to make tradeoffs along the way so that estimates get better over time. When you are actively managing your backlog, you would expect that estimates, or at least estimate trends, should begin to converge on reality. Again, if not… you have no way of communicating what your business people are going to get when you run out of time and money. We have not given up on estimation and feel it is a critical part of the process… that said, lots of things in the organization have to be worked through for estimation to work.

Finally, I’d suggest that there is a practical limit to what’s reasonable around breaking up stories. I agree with Doug that there is a point where breaking something up just because you can, doesn’t actually help the process.

There is clearly nuance to everything that each of us put out in the community. We are all trying to solve problems for our particular customers based on our own set of experiences. Just because your experiences are different from the kinds of things Doug and I are working in every day… doesn’t make them invalid or wrong. It might not make them applicable in your context. They are perfectly valid in our context.

Candidly, I felt like your comment was a little snarky, dogmatic, and condescending. I think Doug has a valid point of view, especially in the large enterprise contexts we are working in. If you have any absolute data, that goes past personal experience or opinion, that proves Doug wrong in our context, I’d be happy for you to post it.

That said, thanks for your comment. I’m breathing now ;-)

Clinton Keith

Good article. The comments were interesting and highlighted that our experiences with different teams working on different types of products can result in different experiences. To interpret anything in an article or comment as saying “this is the one way to do it, because it worked for me” creates barriers and violent communication. I struggle with not trying to cast things that way.

Thanks for the article!

Eddie

Hi Doug, absolutely! You put it better than I did. Funnily enough I had this exact situation on Friday while helping a team with sprint planning. They had got caught up with their velocity number and started going down the rabbit hole trying to convince themselves that their sprint backlog could be done as it matched the velocity of the last sprint. I helped them come back to reality by asking them to explore their plan for delivering the sprint; when they investigated _how_ they would work as a team their confidence in committing to the sprint increased but they also recognized that there was a lot of complexity involved and hence risk, which they would need to be mindful of throughout sprint execution. At the core of their challenges, though, is the fact that their stories are probably too big so flow is lumpy and velocity volatile, making sprint planning usually very difficult. They are a mature team so can cope with large stories (and sometimes don’t have a choice), but shouldn’t take on so many in every sprint that they unnecessarily increase risk and uncertainty.

Lianhau Lee

I do agree with splitting a story into reasonably smaller stories, but contrary to common understanding, the “relative” variance of smaller stories is not going to be smaller.

A story can be characterized by its complexity. When a story is small, its ‘absolute’ variance is small as well, e.g. the variance of a 1 point story could be on the order of 1 day of work, whereas the variance of a 13 point story could be on the order of weeks. However, when we look at the relative variance of the story, we observe something contrary to common belief:

1 point story variance 110%

2 point story variance 89%

3 point story variance 70%

This is easier to understand with actual data: a 1 point story covers work that spans 0-1+ days, so its relative variance is larger than that of a 3 point story, which covers work that could span 2-4 days in actual delivery. So what’s actually happening here? Although the absolute variance of a small story is small, the size of the story itself is small as well, so the relative variance stays about the same or could be larger, as we see above.

Relative variance = absolute variance / size of story

When the size of the story is small and the absolute variance stays relatively constant, the denominator is the dominating factor in the relative variance.

On the other hand, the relative variance of a large story is dominated by the numerator, i.e. the sheer amount of unknown factors. Hence, when the story size becomes larger, the relative variance will become larger as well.
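The formula above can be sketched in a few lines. The spreads used here are read loosely from the day ranges mentioned in this comment (about 1 day of spread for a 1 point story, about 2 days for a 3 point story), and treating 1 point as roughly 1 day is an assumption made only for this illustration, so the results are indicative rather than a reproduction of the percentages listed earlier.

```python
def relative_variance(absolute_variance, story_size):
    """Relative variance = absolute variance / size of story."""
    if story_size <= 0:
        raise ValueError("story_size must be positive")
    return absolute_variance / story_size


# Illustrative inputs: (story size in days, spread in days).
# Assumption: 1 point is roughly 1 day of work.
examples = {
    "1 point story": (1.0, 1.0),  # work spans roughly 0 to 1 day
    "3 point story": (3.0, 2.0),  # work spans roughly 2 to 4 days
}

if __name__ == "__main__":
    for name, (size, spread) in examples.items():
        print(f"{name}: relative variance {relative_variance(spread, size):.0%}")
```

With these inputs the small story comes out at 100% and the 3 point story at about 67%, which shows how the small denominator dominates the result for small stories.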

Hence, it is no good to have all stories at 1 point, as you end up with high variance. Nor do you want to have all big stories, as you lose agility. Rather, you need a natural blend of stories of different sizes.

With that said, if traceability (the ability to see the incremental progress every day) is all you are looking for, go for all 1 point stories, at the expense of predictability (the ability to predict the delivery date).

One possible way to circumvent the high relative variance of small stories is a more granular definition of story points, e.g. 1 point means 1-2 hours of work, 2 points mean half a day of work, 3 points mean 1 day of work, 5 points mean 2 days of work, etc.

But as stories get larger, story point estimation based on time gets on developers’ nerves, and we could switch to a complexity scale, e.g. pick a story with 5 points (or 2 days’ worth of work) as the baseline, compare all “large” stories against it in terms of complexity, and label them accordingly with 5, 8, 13, etc.

With a proper project management tool that could derive the times from the corresponding story points, we could then have high predictability, agility, and happy developers.